You drop a thousand dollars on a new GPU. Frame rates are great. Then six months later, you read that chiplet designs could have given you more cores for less money. Now you feel like an idiot.

I was in that exact spot last year. Bought a high-end card right before chiplet rumors heated up. The frustration is real.

This guide fixes that. You will learn what chiplet GPUs actually are, why they matter to your build, and whether you should wait or buy today. No marketing fluff. Just the reality of where GPU design is heading and what that means for gamers in 2026.

We will dig into AMD’s chiplet approach, compare it to traditional monolithic designs, and give you practical advice for your next purchase decision. The truth is, chiplet technology changes everything about how we think about GPU upgrades.

What Chiplet GPUs Actually Are (And Why You Should Care)

Think of a traditional GPU as one giant factory on a single piece of land. Everything is built together. One flaw anywhere means scrapping the whole thing.

Chiplet design is different. You build several smaller factories and connect them with smart highways. If one factory has a defect, you only lose that piece. Not the entire production line.

That is chiplet architecture in a nutshell. Instead of one massive die doing all the graphics processing, you split tasks across multiple smaller dies on the same package. They talk to each other through high-speed interconnects like AMD’s Infinity Fabric.

How Chiplets Change Manufacturing

Chiplets solve a huge manufacturing problem. As GPUs get bigger, yields drop. Yield is the share of chips on a silicon wafer that come out working. A tiny defect on a monolithic die can ruin the whole chip.

With chiplets, defects only kill smaller pieces. You can mix and match good chiplets to build complete GPUs. This approach boosts yields dramatically. Better yields mean lower costs per working GPU.
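
To put rough numbers on the yield argument, here is a minimal sketch using the simple Poisson yield model, where the chance of a defect-free die falls exponentially with die area. The defect density and die sizes are illustrative assumptions, not figures from any real product, and the model ignores packaging cost, interconnect area, and salvaged partial dies.

```python
import math

def die_yield(area_mm2: float, defects_per_cm2: float) -> float:
    """Poisson yield model: probability that a die of this area has zero defects."""
    area_cm2 = area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)

def silicon_per_good_gpu(total_area_mm2: float, num_dies: int,
                         defects_per_cm2: float) -> float:
    """Average silicon (mm^2) consumed per working GPU.

    Assumes every die is tested before packaging, so a defect only
    costs the one die it lands on.
    """
    die_area = total_area_mm2 / num_dies
    return total_area_mm2 / die_yield(die_area, defects_per_cm2)

DEFECT_DENSITY = 0.1  # defects per cm^2 (assumed for illustration)

monolithic = silicon_per_good_gpu(600, 1, DEFECT_DENSITY)  # one 600 mm^2 die
chiplets = silicon_per_good_gpu(600, 4, DEFECT_DENSITY)    # four 150 mm^2 dies

print(f"Monolithic: {monolithic:.0f} mm^2 of wafer per good GPU")  # about 1093
print(f"Chiplets:   {chiplets:.0f} mm^2 of wafer per good GPU")    # about 697
```

Under these assumptions the chiplet version burns roughly a third less silicon per working GPU. Real savings depend on the actual defect density and on how much the packaging adds back.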

AMD already proved this with CPUs. Their Ryzen processors use chiplets and crushed Intel on price-to-performance for years. Now they are bringing the same strategy to GPUs.

The Interconnect Challenge

The catch is communication. Chiplets need to share data constantly. If the interconnect is slow, you create latency. Latency kills gaming performance.

That is why chiplet GPUs took so long to arrive. AMD spent years developing Infinity Fabric technology. It needed to be fast enough to keep chiplets working like a single unified GPU.

Most consumer GPUs still use monolithic designs because interconnects have not been fast enough for gaming. But in 2026, that is changing. New patent filings show AMD working on smart switch technology to optimize memory access between chiplets.

Check Your Build Compatibility Now

Before planning a chiplet GPU upgrade, verify your current system balance. Chiplet designs can shift bottleneck dynamics in unexpected ways.

The Current Chiplet GPU Reality (Not What Marketing Tells You)

AMD’s Chiplet Journey

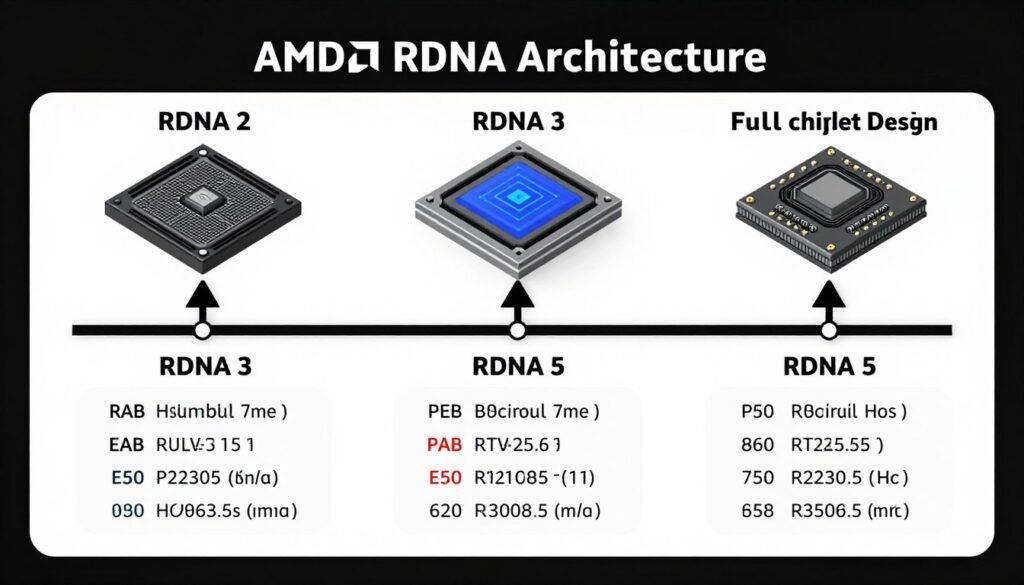

AMD tried chiplets with RDNA 3. The RX 7900 series used a hybrid approach: one main graphics die paired with smaller chiplets that hold the memory controllers and cache. The results were mixed.

Gaming performance was good but not revolutionary. The chiplet design did not deliver the massive gains AMD promised. Every memory access had to cross from the graphics die to a memory chiplet, and that added latency may have contributed to small stutters in some titles.

That is why AMD stepped back. RDNA 4 went back to monolithic designs for consumer gaming GPUs. They needed to fix the interconnect problems first.

Where Chiplets Work Today

Chiplets shine in compute accelerators. AMD’s Instinct MI300 series uses chiplet designs for AI and data center work. Those workloads are less sensitive to latency than gaming.

The approach works because compute tasks can be split cleanly across chiplets. Video rendering, AI training, and scientific calculations do not need instant die-to-die communication like gaming does.

For gamers, chiplet GPUs are still a future technology. RDNA 5 is rumored to bring true multi-chiplet designs for gaming, but no launch date is confirmed. Expect 2027, or late 2026 if AMD hits targets.

NVIDIA’s Stance

NVIDIA is not rushing into chiplets for gaming GPUs. They are sticking with monolithic dies for the RTX 50-series. Their manufacturing partnership with TSMC gives them good enough yields on large dies.

But even NVIDIA is exploring chiplets for future architectures. Patents show work on multi-die designs with advanced interconnects. They just are not ready to deploy them yet.

The reality is, both companies know chiplets are coming. The question is when the technology matures enough for gaming workloads. Learn more about current GPU pairing strategies while we wait.

Performance Implications (What Benchmarks Don’t Tell You)

The Latency Tax

Every time data crosses between chiplets, you pay a latency penalty. It is like adding an extra step to every calculation. In synthetic benchmarks, this might not show up much.

But in real gaming, it matters. Frame times can become inconsistent. You might see the same average FPS but feel more micro-stutters. That is the chiplet tax.
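
A quick sketch shows how two runs can share an average FPS and still feel different. The frame times below are made up for illustration, and the "1% low" here is one common definition (the FPS implied by the slowest 1% of frames), not a standard every reviewer uses.

```python
from statistics import mean

def average_fps(frame_times_ms):
    """Average FPS over the whole run."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

def one_percent_low_fps(frame_times_ms):
    """FPS implied by the slowest 1% of frames (at least one frame)."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    return 1000.0 / mean(slowest_first[:count])

# Hypothetical captures: both runs render 200 frames in exactly 2 seconds.
smooth = [10.0] * 200                    # every frame takes 10 ms
stuttery = [9.0] * 190 + [29.0] * 10     # mostly faster, with ten 29 ms spikes

for name, run in (("smooth", smooth), ("stuttery", stuttery)):
    print(f"{name}: avg {average_fps(run):.0f} FPS, "
          f"1% low {one_percent_low_fps(run):.1f} FPS")
```

Both runs average 100 FPS, but the second one drops to about 34 FPS in its worst frames. That gap is what you feel as micro-stutter, so check frame-time data in reviews, not just averages.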

AMD’s smart switch patent aims to fix this. The idea is routing data through the fastest path automatically. If one chiplet needs texture data, the switch finds the memory controller with the lowest latency.

Memory Bandwidth Challenges

Chiplet designs complicate memory access. With a monolithic die, every core reaches memory through controllers on the same piece of silicon. Simple and fast.

With chiplets, memory controllers might sit on different dies. A GPU core on chiplet A needs data from memory attached to chiplet B. That adds hops and latency.

HBM helps. High Bandwidth Memory sits on the package right next to the GPU and uses a far wider interface than GDDR6 or GDDR7, so each stack delivers a lot of bandwidth. That is why data center chiplet GPUs use HBM. But HBM is expensive. Too expensive for consumer gaming GPUs right now.
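
The bandwidth math is simple: peak bandwidth is bus width times per-pin data rate, divided by eight to get bytes. The sketch below uses illustrative figures in the range of shipping parts (a 384-bit GDDR6 card at 20 Gbit/s per pin, and a single 1024-bit HBM3 stack at 6.4 Gbit/s per pin). Treat them as assumptions, not the spec of any particular card.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) x per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8

# Illustrative configurations (assumed values).
gddr6_card = peak_bandwidth_gbs(384, 20.0)   # a whole card's GDDR6 bus
hbm3_stack = peak_bandwidth_gbs(1024, 6.4)   # one HBM3 stack

print(f"GDDR6, 384-bit @ 20 Gbit/s:       {gddr6_card:.1f} GB/s")
print(f"HBM3, one 1024-bit stack @ 6.4:   {hbm3_stack:.1f} GB/s")
```

A single HBM stack lands close to an entire high-end GDDR6 card, and it does so with slower pins and a much wider bus. Data center parts put several stacks on one package, which is where the cost climbs.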

Future designs might use GDDR7 with wider buses. Or hybrid approaches mixing memory types. The goal is keeping all chiplets fed with data fast enough to avoid stalls. Check out our guide on VRAM bottlenecks to understand memory limitations better.

Scaling Benefits

The upside is massive scalability. Want a faster GPU? Add more chiplets. Want a budget card? Use fewer chiplets. Same base design, different configurations.

This is how AMD builds Ryzen CPUs. A Ryzen 5 and Ryzen 9 use the same chiplets. Just different quantities. Apply that to GPUs and you get incredible flexibility.

Manufacturers could build one chiplet design and scale from budget to flagship by changing chiplet count. Development costs drop. Product lines simplify. Gamers get more options at better prices.

Benefits of Chiplet GPUs

- Lower manufacturing costs through better yields

- Easier scalability across product tiers

- Faster time-to-market for new architectures

- Potential for modular upgrades in the future

- Reduced waste from defective silicon

Current Limitations

- Interconnect latency affecting frame times

- Complex memory access patterns

- Higher power consumption for interconnects

- Driver optimization challenges

- Limited real-world gaming validation

For serious performance analysis of your current or planned build, understanding component synergy is crucial. Use our PC bottleneck calculator to check how chiplet GPUs might interact with your CPU and memory configuration.

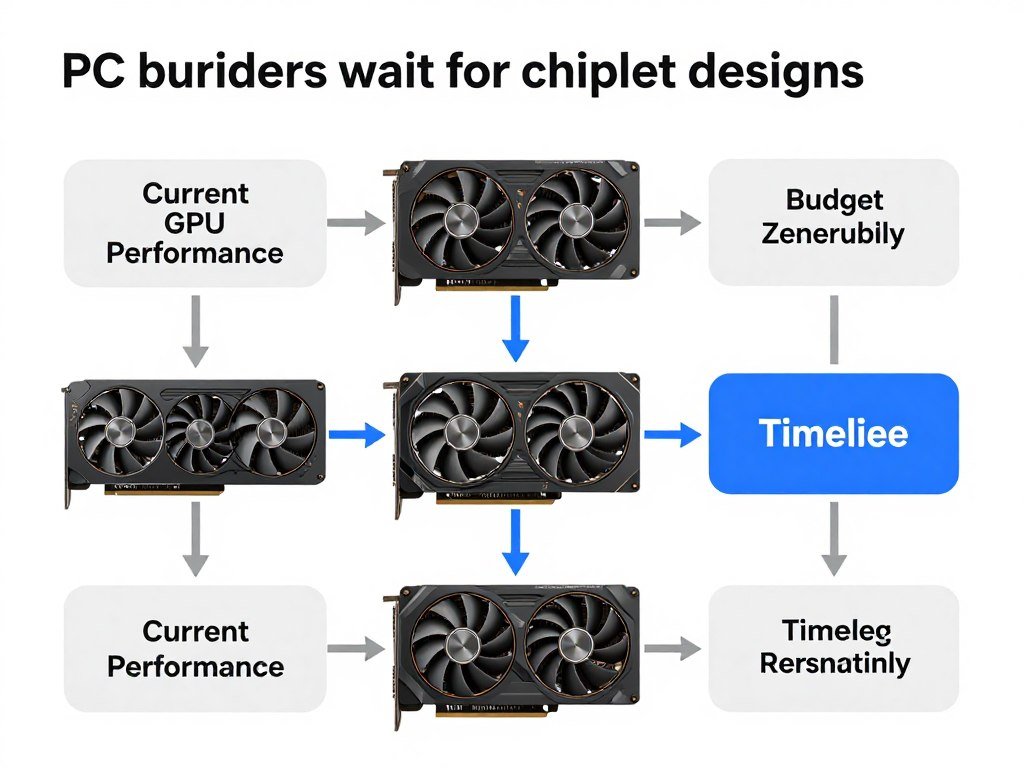

Practical Buying Advice (Should You Wait or Buy Now?)

If You Need a GPU Today

Do not wait for chiplets. The RTX 5070 and RX 9070 XT are here now. They work. They deliver solid gaming performance without chiplet complications.

Chiplet gaming GPUs are at least 12 to 18 months away. Maybe longer if AMD hits delays. That is too long to sit on integrated graphics or a dying card.

Buy what performs today. You can always sell and upgrade when chiplet designs mature. GPU resale values hold decently if you buy smart. Check our budget build guide for current recommendations.

If You Can Wait

Waiting makes sense if your current GPU handles your games fine. RDNA 5 chiplet designs could offer better price-to-performance once they launch.

But set a deadline. Do not wait forever. Tech always improves. There is always something better six months out. That is the trap.

If you are planning a full build in late 2026 or 2027, waiting could pay off. Chiplet GPUs should be stable by then. Early adopter issues will be sorted. Drivers will be mature.

System Balance Considerations

Chiplet GPUs might shift bottleneck patterns. Multi-die designs could stress CPUs differently than monolithic GPUs. Memory bandwidth requirements might change.

Before committing to a chiplet GPU build, verify your system balance. A powerful chiplet GPU paired with a weak CPU could create unexpected bottlenecks. Our system balance guide explains pairing strategies.

Also consider resolution. At 4K, GPUs do most of the work. Chiplet latency might matter less. At 1080p high refresh, CPU-GPU communication is critical. Latency issues could show up more. Read about resolution bottlenecks for details.

Future-Proofing Thoughts

Chiplets enable modular designs. In theory, future GPUs could use swappable chiplet modules. Upgrade your graphics cores without replacing memory controllers.

That is speculation. No manufacturer has committed to modular consumer GPUs. But the architecture supports it. If it happens, chiplet GPUs become a better long-term investment.

For now, buy for your current needs. Future-proofing is mostly a myth in PC building. Performance needs change. Software evolves. What seems future-proof today is obsolete in three years.

Technical Deep Dive (For the Hardware Nerds)

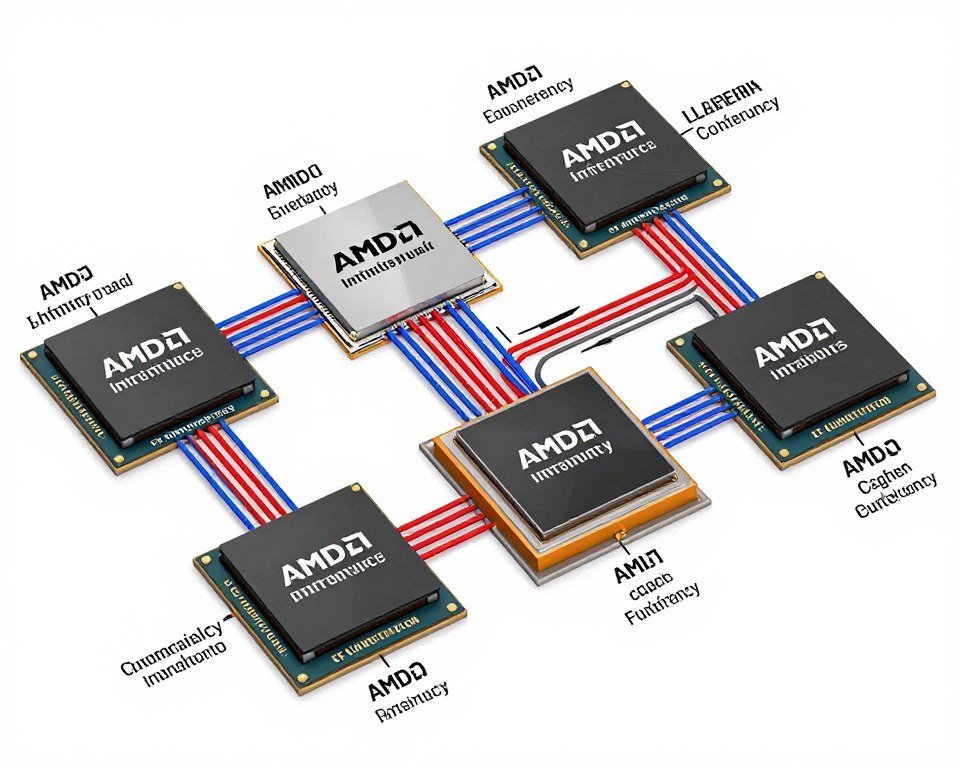

Infinity Fabric Evolution

AMD’s Infinity Fabric is the glue holding chiplets together. It started with Ryzen CPUs. First-gen Infinity Fabric had latency issues. Memory performance suffered.

AMD iterated. Infinity Fabric 2.0 improved speeds. Latency dropped. Ryzen 3000 and 5000 series CPUs showed massive gains. The lesson: interconnects get better over time.

For GPUs, AMD is developing specialized Infinity Fabric variants. Higher bandwidth. Lower latency. Optimized for graphics workloads instead of CPU tasks.

Smart Switch Technology

AMD’s new patent describes a smart switch managing chiplet communication. Think of it as a traffic controller for GPU data.

The switch monitors which chiplets need which data. It routes requests through the fastest available path. If direct paths are congested, it finds alternatives. This dynamic routing reduces average latency.

Similar approaches work in network switches and data centers. Applying packet-switching logic to GPU dies makes sense. The challenge is doing it fast enough to not add overhead.
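
To make the routing idea concrete, here is a minimal sketch that picks the lowest-latency path through a small graph of dies using Dijkstra's algorithm. This is not AMD's patented method. The die names, link layout, and latency figures are all hypothetical, and a real switch would do this in hardware, not software.

```python
import heapq

def lowest_latency_path(links, source, target):
    """Dijkstra's algorithm over a dict of {node: {neighbor: latency_ns}}."""
    best = {source: 0.0}
    previous = {}
    queue = [(0.0, source)]
    while queue:
        latency, node = heapq.heappop(queue)
        if node == target:
            break
        if latency > best.get(node, float("inf")):
            continue  # stale queue entry, a faster route was already found
        for neighbor, cost in links.get(node, {}).items():
            candidate = latency + cost
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                previous[neighbor] = node
                heapq.heappush(queue, (candidate, neighbor))
    if target not in best:
        return None, float("inf")
    # Walk backwards from the target to rebuild the route.
    path = [target]
    while path[-1] != source:
        path.append(previous[path[-1]])
    return path[::-1], best[target]

# Hypothetical package: the direct link from compute_a to mem_0 is congested,
# so its current latency (40 ns) is worse than going through compute_b.
links = {
    "compute_a": {"mem_0": 40, "compute_b": 10},
    "compute_b": {"mem_0": 15, "mem_1": 12},
}

path, latency = lowest_latency_path(links, "compute_a", "mem_0")
print(path, latency)  # ['compute_a', 'compute_b', 'mem_0'] 25.0
```

The detour wins here because the link weights reflect current congestion, not just wire length. The hard part in silicon is updating those weights and making the decision in a few nanoseconds.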

Memory Controller Distribution

Monolithic GPUs keep their memory controllers on the same die as the cores. Every core reaches memory over the same on-die fabric. Clean and simple.

Chiplet designs distribute controllers. Each chiplet might have its own memory interface. Or controllers sit on dedicated I/O dies. Either way, memory access becomes a routing problem.

The benefit is bandwidth scaling. More chiplets mean more memory controllers. Total memory bandwidth increases. But latency and access complexity increase too. Engineers trade off between bandwidth and latency.
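
The tradeoff can be sketched with a toy model. It assumes each chiplet brings one memory controller, addresses are spread evenly across controllers, and any access to another chiplet's memory costs one fixed hop. All numbers are hypothetical.

```python
def memory_scaling(num_chiplets, per_controller_gbs, local_ns, hop_penalty_ns):
    """Return (total bandwidth in GB/s, average access latency in ns).

    Toy model: one controller per chiplet, evenly interleaved addresses,
    and a single fixed penalty for any access that leaves the chiplet.
    """
    total_bandwidth = num_chiplets * per_controller_gbs
    remote_fraction = 1 - 1 / num_chiplets  # share of accesses that cross dies
    average_latency = local_ns + remote_fraction * hop_penalty_ns
    return total_bandwidth, average_latency

# Assumed figures: 250 GB/s per controller, 100 ns local, 40 ns per hop.
for chiplets in (1, 2, 4, 8):
    bandwidth, latency = memory_scaling(chiplets, 250, 100, 40)
    print(f"{chiplets} chiplets: {bandwidth} GB/s, {latency:.0f} ns average")
```

Bandwidth grows in a straight line while average latency creeps toward the full hop penalty. With four chiplets, three out of four accesses already leave the local die in this model, which is why smarter data placement matters so much.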

Understanding these tradeoffs matters for workloads. Gaming favors low latency. AI training favors high bandwidth. Chiplet designs need to optimize for target use cases. For more on memory performance, see our GDDR7 analysis.

Power and Thermal Challenges

Chiplets change thermal profiles. Instead of one hot spot, you have multiple heat sources. Cooling solutions need to spread thermal loads evenly.

Interconnects also consume power. Data moving between chiplets uses energy. High-speed links like Infinity Fabric are not free. Total GPU power consumption might increase compared to equivalent monolithic designs.
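
A back-of-envelope estimate shows why link power is not free. Link power is roughly the traffic in bits per second times the energy spent per bit. Both input values below are assumptions picked for illustration, since real energy-per-bit figures vary widely with packaging technology.

```python
def link_power_watts(traffic_gbs: float, picojoules_per_bit: float) -> float:
    """Estimate die-to-die link power from traffic (GB/s) and energy per bit (pJ)."""
    bits_per_second = traffic_gbs * 1e9 * 8
    return bits_per_second * picojoules_per_bit * 1e-12

# Assumed: 2000 GB/s of sustained die-to-die traffic at 0.5 pJ per bit.
print(f"{link_power_watts(2000, 0.5):.1f} W")  # 8.0 W
```

Eight watts is small next to a whole card's power budget, but it scales directly with traffic and with the energy cost of the link. A less efficient interconnect at the same traffic could cost several times more.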

This matters for build planning. Chiplet GPUs might need beefier PSUs and better cooling. Factor that into upgrade budgets. Check our PSU buying guide when planning.

Optimize Your Future Chiplet Build

Chiplet GPUs will change component pairing strategies. Get ahead by understanding current bottleneck patterns and how multi-die designs might shift them.

Industry Outlook (Where This Tech Is Really Heading)

AMD’s Commitment

AMD is all-in on chiplets. They proved the approach with CPUs. Now they are pushing it to GPUs. RDNA 5 is expected to be a full multi-chiplet architecture for consumer gaming.

UDNA is AMD’s future unified architecture. It will merge RDNA gaming and CDNA compute designs. Chiplets make this unification possible. Same chiplet building blocks, different configurations for gaming versus AI.

This strategy gives AMD flexibility. They can compete across market segments without designing entirely separate chips. Development costs drop. Time to market improves.

NVIDIA’s Cautious Approach

NVIDIA moves slower. They have the performance crown with monolithic dies. Why rush into chiplets and risk latency problems?

But NVIDIA is preparing. Patents show chiplet work. They are waiting for interconnect technology to mature. When latency hits acceptable levels, NVIDIA will deploy chiplets.

NVIDIA’s advantage is that large dies still work for them. Their partnership with TSMC gives access to cutting-edge manufacturing, and yields are good enough on big chips. Chiplets are less urgent for them.

Intel’s Wild Card

Intel is the dark horse. Their Arc GPUs currently use monolithic designs. But Intel already ships multi-die CPUs. Meteor Lake and Arrow Lake use tile-based designs.

If Intel applies that expertise to GPUs, they could leapfrog competitors. Battlemage shipped as a monolithic design, but Celestial might incorporate chiplet approaches. Or Intel might wait for Druid, their future high-end architecture.

The wild card is Intel’s foundry. If they can manufacture GPU chiplets domestically, that is a strategic advantage. Less dependence on TSMC. More control over supply chains. For CPU-GPU pairing insights, see our Intel vs AMD comparison.

Market Impact

Chiplets could reshape GPU pricing. Better yields mean lower costs. That savings might pass to consumers. Or manufacturers might pocket the profit. History suggests a mix of both.

Competition will determine pricing. If AMD deploys chiplets first and undercuts NVIDIA, prices drop. If all manufacturers adopt chiplets simultaneously, prices might stay flat while performance increases.

For gamers, chiplets should expand options. More SKUs at more price points. Better availability since yields improve. Fewer paper launches and stock shortages.

The Bottom Line

Chiplet GPUs are coming. AMD leads the charge with RDNA 5 expected in late 2026 or 2027. NVIDIA and Intel will follow once interconnect technology matures.

The benefits are real. Lower costs through better yields. Easier scalability across product lines. Potential for modular upgrades. But latency challenges remain. Gaming workloads demand low die-to-die communication delays.

For current buyers, do not wait. Available GPUs deliver solid performance without chiplet growing pains. Buy what works now. Upgrade later when chiplet designs prove themselves in real gaming scenarios.

For future planners, chiplet GPUs look promising. They should offer better value once mature. But set realistic timelines. Do not wait forever for technology that keeps getting delayed.

The smart move is staying informed. Monitor chiplet GPU launches. Read independent reviews. Avoid early adopter pitfalls. Let others beta test new architectures.

Most importantly, match technology to your actual needs. Chiplet GPUs are engineering marvels. But they only matter if they improve your gaming experience. Do not buy hype. Buy performance.

Make Smarter GPU Decisions

Whether you choose current monolithic GPUs or wait for chiplet designs, understanding system balance is critical. Check how any GPU pairs with your CPU and avoid costly mismatches.

Final Thoughts

Chiplet GPU technology represents a fundamental shift in graphics card design. The move from monolithic dies to multi-chiplet architectures mirrors the CPU revolution that AMD started with Ryzen.

We are watching history repeat itself. Early chiplet CPUs had teething problems. Latency issues. Memory quirks. Driver headaches. But AMD iterated. The technology matured. Now chiplet CPUs dominate.

GPUs will follow the same path. Current chiplet implementations have flaws. But engineers are solving them. Interconnects are getting faster. Smart routing reduces latency. Memory access is improving.

As PC builders, our job is staying informed and making smart decisions. Use chiplet knowledge to plan future upgrades. Understand the technology so you can evaluate real products when they launch.

The chiplet revolution is here. Not quite ready for prime time in gaming GPUs, but close. By 2026 or 2027, multi-die designs should be mainstream. Prices will drop. Performance will scale. Options will expand.

Until then, build with what works. Keep learning. Stay flexible. The best PC builders adapt to new technology without getting burned by hype. That is the real skill.

For ongoing hardware insights and practical build advice, bookmark our knowledge base. We cut through marketing nonsense and deliver real-world guidance. Because you deserve better than regret six months after buying a GPU.